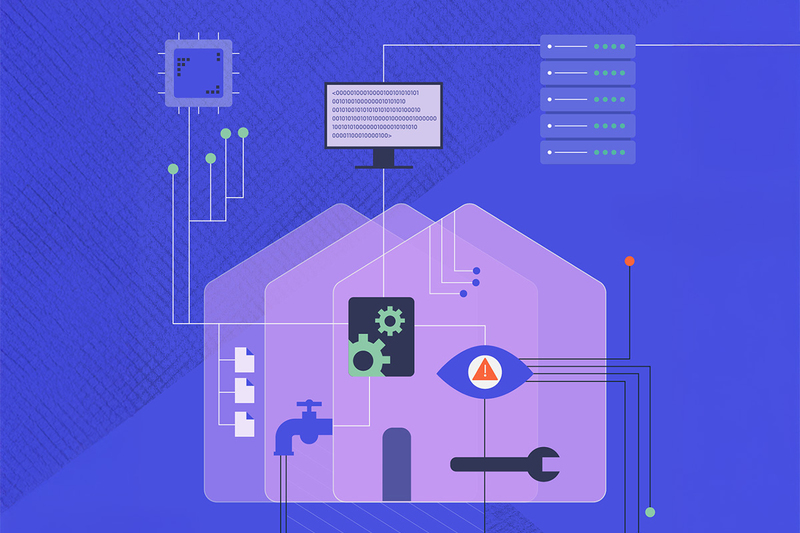

Board Member Briefing: is your organisation AI-ready? Is it already too late?

AI has the potential to save organisations huge amounts of time and money, but it also brings the potential for mistakes, misuse and legal ramifications. With many staff likely already using AI in their work, how can boards make sure it is being used properly? Hannah Fearn reports. Illustration by Daniella Ferretti.
